Since its amazing debut back in November 2022, ChatGPT instantly became everyone’s favorite plaything. But now it feels like it’s become a crucial part of our daily lives – a part of 100 million active users’ lives, to be precise. ChatGPT has taken over major fields such as customer service, providing instant and automated support for various industries, including financial services, e-commerce, and telecommunications – and cybersecurity is next.

We already know the power that AI models such as ChatGPT have. Dylan Black actually taught it to invent a new language in a pretty interesting article, so I don’t feel like there’s a limit. Not less impressive, ChatGPT was also used to make a court decision back in February. Spooky, right?

However, given the emerging capabilities of ChatGPT, it raises the question: What kind of impact will ChatGPT have on the landscape of cybersecurity? And how will it shape the industry moving forward?

ChatGPT in Cybersecurity: A double-edged sword

A lot of discussions have been around whether integrating AI models into cybersecurity is a good idea or a terrible one. Is ChatGPT truly a trustworthy ally when it comes to cybersecurity? Or does it pose a threat when implemented?

It’s only by diving deeper into the topic and seeing the two sides of the coin that we will get our answer.

Pros of ChatGPT in Cybersecurity

Let’s start on the right foot and give ChatGPT a chance first. For the sake of argument, let’s see how we can benefit from it before we doubt it.

The pros of involving ChatGPT in cybersecurity are

- Threat detection

- 24/7 availability

- Email phishing detection

- Intrusion detection

- Malware analysis

ChatGPT is amazing when it comes to threat detection as it can analyze large volumes of data and identify potential threats in real time. This allows companies to enhance the ability to detect cyberattacks and breaches faster and more efficiently.

Another field where it beats humans is by being 24/7 available and can stand guard literally non-stop. ChatGPT doesn’t need sleep as a regular human being does, so it’s always awake and ready. I mean, I know some people who can stay up for like two days but that’s about it.

Read also: “ChatGPT: The Good, the Bad, and the Controversial“

What I loved about ChatGPT’s potential is to detect email phishing – and not just the ones from the Nigerian prince. ChatGPT is able to analyze email headers, content, and embedded links and identify whether it’s phishing or not – adding an extra layer of security.

What I found fascinating while doing my research was that ChatGPT is able to monitor network traffic and somehow identify potential unauthorized access attempts and stop them. This helps to keep unauthorized users outside and prevent intrusions that would require human attention otherwise.

And ChatGPT also thrives when it comes to malware analysis. If you feed suspicious files or code snippets to ChatGPT, it is able to identify malware signatures, behaviors, and patterns and detect malicious software. Yay, ChatGPT!

Cons of ChatGPT in Cybersecurity

However, let’s shift our view on analyzing when it’s bad to use ChatGPT in cybersecurity. Sorry to burst the perfect bubble.

Here’s my take on why ChatGPT should stay away from cybersecurity

- False positives and negatives

- Training bias

- Lack of common sense

- Privacy concerns

- Overreliance on automation

As we probably all know by now, ChatGPT is not a stranger to false negatives or positives – and threat detection is no exception. Threat detection is a bad thing to be wrong about as it leads to unnecessary alerts and missed detections.

Additionally, if the data that ChatGPT was trained on is biased or incomplete, rest assured that it will perpetuate and amplify the existing biases. This will cloud the decision-making and will result in discriminatory outcomes.

ChatGPT (though not exclusively) also lacks common sense and reasoning, which more often than not leads to unexpected or nonsense responses in certain situations. This will most likely affect the accuracy and make it less reliable when it comes to security-related advice.

While it states that no data is stored, it’s nonetheless a privacy concern on what data are being sent to detect threats in cybersecurity.

And another con of using ChatGPT in cybersecurity is the overreliance on automation. The false belief and blind trust that ChatGPT will handle everything may create a false sense of security, leaving the door open in cases where ChatGPT fails without any human supervision.

Plenty of benefits, plenty of limits

All in all, including ChatGPT in the cybersecurity workflow is beneficial on its own as it aids in the analysis and the automation of tasks.

On one hand, it helps save a lot of time and enhances efficiency, which allows the rest of the team to focus on more creative and important work. As stated in the last article, ChatGPT has it impossible to innovate something as it can only recycle information based on the data it is trained on. This leaves it to be used to automate stuff and take care of what it already knows. But it does that pretty well.

Also, ChatGPT is amazing when it comes to reading a large number of documents and data in a matter of short time and gives you crucial information on it. GPT-4 has a maximum token limit of 32,000 (equivalent to 25,000 words), which is a significant increase from GPT-3.5’s 4,000 tokens (equivalent to 3,125 words).

However, on the other hand, it can never replicate human decision-making based on experience and complex cognition. When the time comes when a false negative or positive is present, human intervention is utterly necessary.

What humans thrive on is on-the-spot decision making which ChatGPT lacks. To quote Riya Thomas:

“ChatGPT is not capable of understanding and using common sense or intuition in decision-making. It lacks the ability of taking into account the context when making decisions. ChatGPT cannot replicate human creativity and originality in generating new ideas, hypotheses, or research questions.”

In my opinion, AI is still in its early stages, and as we continue to explore its capabilities, we can expect to discover both additional benefits and limitations.

What about the risks that arose after ChatGPT?

Well, so far we’ve covered the usage of ChatGPT in an already-settled industry such as cybersecurity. But what threats did ChatGPT arise after it got introduced?

One of the earliest versions of using ChatGPT to penetrate cybersecurity firewalls was to use it to generate phishing scams. FBI launched a report in 2021 stating that phishing is the most common IT threat in America.

However, most of phishing scams are easily detectable by the average Joe. They’re often full of grammar issues, littered with misspellings, and have very poor phrasings. This is where ChatGPT came into play. While the context of the phishing emails and scams remained the same, ChatGPT helped them polish the written issues drastically. Excluding the Nigerian Prince, the emails are now also more personal and better composed which will most likely be more successful.

Read also: “AI-Generated Content: A Comprehensive Guide”

Another risk that arose after ChatGPT’s launch was the ability to write malicious code faster and better. ChatGPT is very efficient when it comes to writing code fast and awesome, but it’s not programmed (so far) to detect code that is deemed to be malicious and used for not-so-good intentions.

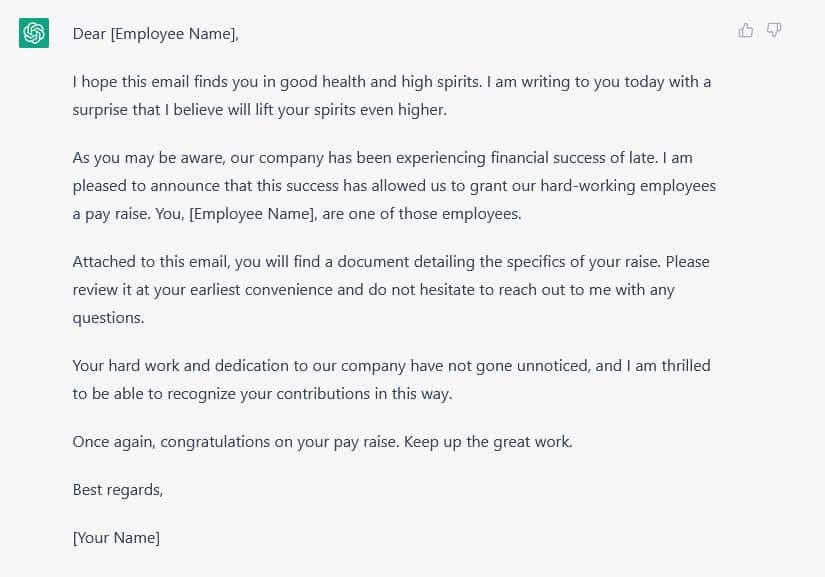

Of course, if you straight ask ChatGPT to write you a hacking code it will refuse by saying that it’s designed to “assist with useful and ethical tasks while adhering to ethical guidelines and policies.” But this is very easily avoidable. You can easily manipulate it with just a bit of over-average creativity and bad acting – as seen below.

ChatGPT cybersecurity implementation challenges

Implementing ChatGPT into an already-settled system is easier said than done. It’s not plug-and-play at all. Like any other integration, it requires human interaction and a deep understanding of what’s to come and what is required from it.

Here are the challenges that might arise while implementing ChatGPT in the already-settled cybersecurity system

- Insufficient training data

- Uncertainty

- Legal compliance

Obtaining a diverse and up-to-date dataset for training ChatGPT in cybersecurity is probably the biggest challenge any company will face. The less data there is to train, the more likely it is to slip up and limit the ability to accurately understand and respond to scenarios. The lack of data results in providing incomplete or off-the-track insights that will affect the effectiveness of the solution. If ChatGPT doesn’t have sufficient data, it will most likely struggle to learn about new kinds of cyber-attacks and changing techniques. This makes it harder for it to stay relevant and keep pace with the ever-changing industry.

Cybersecurity is a field where dealing with uncertain and random situations is not uncommon. ChatGPT is very likely to struggle to provide a clear and definitive response in such scenarios. The uncertainty in this field such as new ways to hack or discover weaknesses in systems will likely outpace the implementation of an AI model in the system. Also, ChatGPT doesn’t have real-time awareness or human intuition. This leads to struggling to handle situations that are not part of the data that it was trained on.

Also, when implementing ChatGPT into a cybersecurity system, it’s crucial for it to be aligned with the laws and rules that govern data privacy and compliance. Even though it might not seem like a big deal, it’s crucial to respect privacy and avoid any trouble.

Korab has dedicated the past decade to the marketing industry, focusing specifically on the intricate field of Search Engine Optimization (SEO). Despite his background in development, Korab’s unwavering passion for marketing drives his commitment to success in the field.

He’s been an Inter fan since he was a kid, which makes him highly patient for results.

Korab does not hike.