In a world where AI is becoming more advanced, the popularity of ChatGPT has made people curious and cautious. There have been questions about whether ChatGPT can be detected by professors or search engines like Google. If yes, what on earth is wrong with it? Why can’t I choose the easy path for once without consequences?

But, the real question everyone has is “Can I use ChatGPT and not get punished?” By ‘punished’ I mean that if a student is caught using ChatGPT, they may face punishment, and if a company is caught using ChatGPT, they can be penalized by Google.

So, put on your explorer’s hat, and let’s dive deeper to know whether ChatGPT can be detected or not! Or something less dramatic.

Can professors tell if you use ChatGPT?

Short answer: Yes and No.

Yes as in “I’ll just paste this in an AI detector and it’ll let me know”. And No as in “AI detectors are not foolproof and make mistakes”.

Just over a month ago, a Texas A&M commerce professor failed an entire class of seniors blocking them from graduating- claiming they all use “Chat GTP”.

To some degree, ChatGPT can be detected. AI detectors are built and designed so that they are trained on data to determine the perplexity of the text, making them good at seeing the origin. It’s best to understand that an AI detector is rather a guide and never a definite tool to determine the authenticity of a text. No detector is foolproof and they all have false positives and false negatives in their results.

However, one can’t simply dismiss all AI detectors as they do a great job nonetheless. They analyze the text and find unusual phrases, technical words, and linguistic patterns that sometimes make the difference between human-written texts and AI-generated ones. The point of AI detectors has never been to evaluate text on professors’ behalf but rather to give them data so they can create information. It falls on the professor’s side to determine whether the text is genuine or not.

So, it’s best to use them along with other evidence before jumping to conclusions about whether someone used ChatGPT or not.

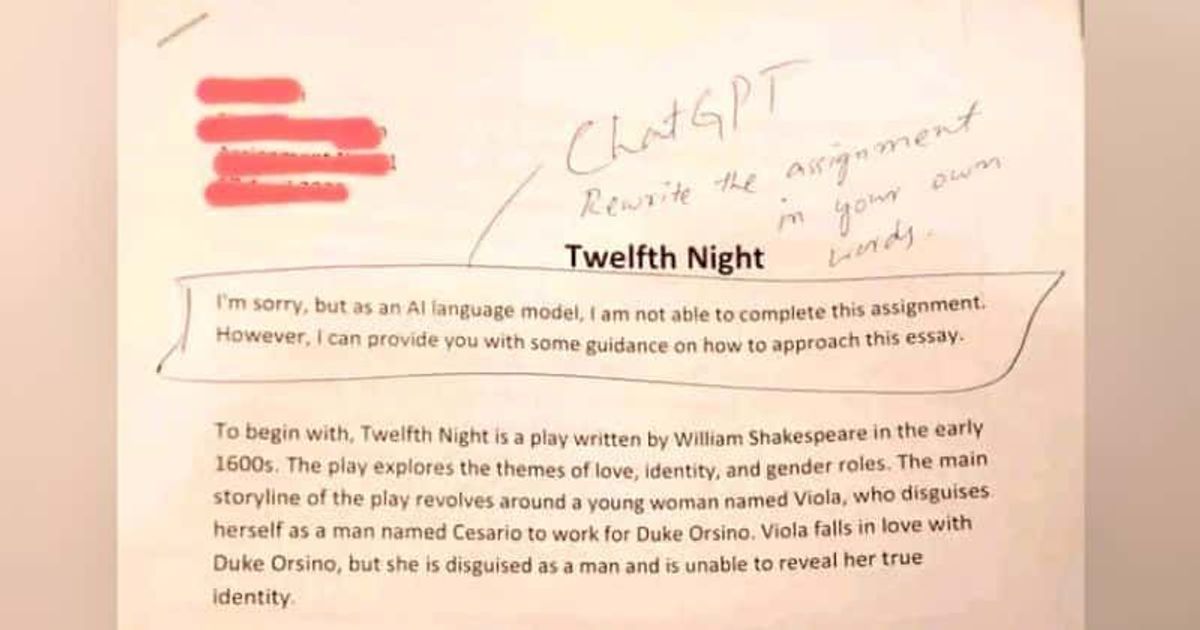

Of course, in some cases, you don’t need any AI detector at all to know it’s AI-generated.

Does Google know if you use ChatGPT?

Google knows if you use AI-generated content – to some degree. That’s obviously a short answer to a heavily loaded question. Let’s dive deeper.

Google’s algorithm is in constant motion and is constantly analyzing written content as it’s mostly what websites offer. The algorithm also checks for poorly written sentences that mostly contain the keyword. For example, if a sentence seems strange or doesn’t make sense to humans but has important words, that’s a clue. And a poor way to do keyword stuffing (hello, it’s not 2009 anymore).

The algorithm also checks for certain patterns and text perplexity that are most common in AI-generated texts. Also, Google has a large dataset and will know if a text was generated simply by scraping information from other sources – as ChatGPT does. If information is recycled and contains linguistic patterns, it’s kind of a clue that it’s AI-generated.

However, newer models like GPT-4 are more advanced and harder to detect. Google is constantly improving its detection methods, but creators of AI tools are also finding ways to bypass detection, creating a cat-and-mouse game.

It’s not that dramatic, though. According to Google’s blog, they ‘reward high-quality content, however, it is produced‘. In their blog, they seem like Google’s stance is that ChatGPT can’t be stopped at this point, and the best way to tackle it is to make people aware of how to use it better.

Of course, copy-pasting stuff from ChatGPT into your page ensures that it will be deranked from Google results as its robotic tone is hard to read, resulting in people leaving your page. People leaving your page often is a sign that your page is not that good, which for Google is enough to derank your page.

Can you tell if someone used ChatGPT?

When it comes to figuring out on your own whether ChatGPT can be detected, there are two ways to do so.

The first way is relying on yourself (like everything else in life, am I right?). On any given text, you can look for hints and clues in the text. If you’ve been around ChatGPT for a while, you’ll notice unusual or needless over-sophisticated language and a writing style that just feels off. More often than not, on social media posts, you can sense the intro of the text and immediately get the feeling that it’s not human-written.

However, you can’t rely only on your senses to know if ChatGPT can be detected.

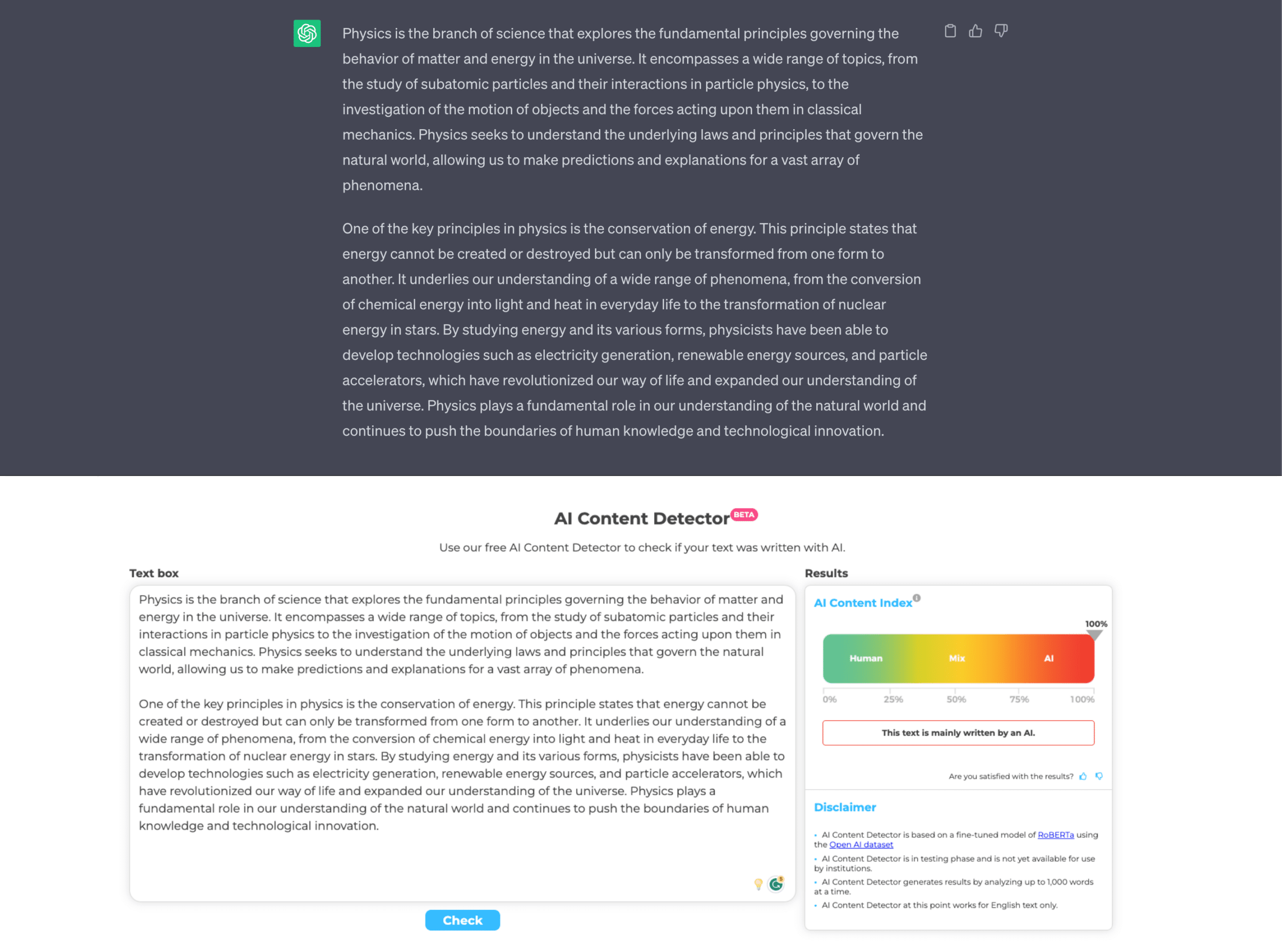

The best way in my opinion is to use an AI detector. AI detectors are specialized tools specifically to detect whether a text contains fragments of ChatGPT. They look for strange sentence structures and the usage of uncommon patterns to determine if a text is genuine or not.

How AI detectors work is by pasting content in the area and clicking a button and voíla – you’ve got an index on whether it’s AI-generated or not. Of course, this is the scenery – let’s go behind the curtain. An AI detector takes a piece of text and breaks it down into sentences to analyze it. There’s something called perplexity which means how ‘easy-to-generate’ a text is. It quantifies the level of difficulty in predicting the next word in a sequence of words in a given context. The lower the perplexity, the more likely it is to be AI-generated.

What are the limitations of ChatGPT?

ChatGPT is far from perfect. Far, far away.

Being still in very early ages of development, there are a lot of issues that still need to be cleared. There have been tons of fun social media posts about how ChatGPT is way off on answers and often makes no sense.

The most crucial limitations of ChatGPT are

- Lack of contextual understanding

- Biased outputs

- Inappropriate or offensive content

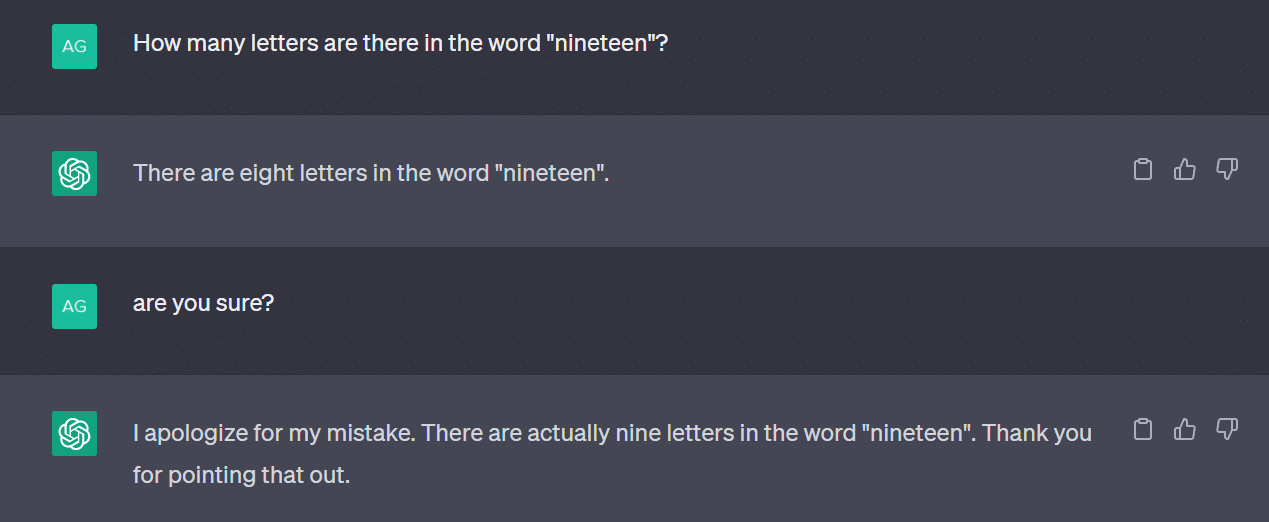

- Limited factual accuracy

While amazing in some areas, contextual understanding is definitely not its best quality. It often struggles to comprehend the context fully and thus resulting in inaccurate and irrelevant responses. I mean, how many times did you have to just ditch a thread with it and start a new one because it was so way off?

And if you believe that a soccer fan is the most biased thing ever, you haven’t met ChatGPT. Some responses are so biased because it learns from human-generated data, which of course may contain inherent bias. Some topics are more nuanced than others, of course so it’s best to keep an eye and an open mind.

Another risk that needs tackling is that ChatGPT can generate inappropriate or offensive responses. As it lacks real-time content filtering and ethical understanding, with the ‘right’ prompt ChatGPT can render some very hurtful and offensive responses.

Similar to human nature, ChatGPT can generate answers that are factually incorrect and based on outdated information, making it not very reliable to use as a source. ChatGPT does not possess real-time knowledge and if you ask it, its last data is from September 2021. It doesn’t even know that Elon Musk is the new CEO of Twitter!

Note: This article is purely my opinion and does not state the viewpoint of Crossplag.

Agnesa is crazy about math and has won lots of prizes. Although her main gig is being a full-stack developer, she also likes to write about topics she knows really well.

But, Agnesa isn’t just about numbers and algorithms.

When she’s not crunching code or weaving words, you’ll find her conquering mountains with her trusty hiking boots!